Beyond Page Speed: What AI Systems Actually Need

When I audit websites for GEO (generative engine optimisation) readiness, I consistently find the same pattern: teams that invested heavily in technical performance (sub-second load times, perfect Core Web Vitals, clean mobile rendering) but built structures that AI systems find genuinely difficult to work with. The pages are fast. They are not citable.

Page speed matters for traditional search and user experience. It still matters. But a GEO-ready website is built around a different set of requirements: can an AI system understand who this site is about, what it claims expertise in, and whether any given section can be used as a reliable citation? These are structural questions, not performance questions. And they require a different kind of audit.

Rima Taha, Global SEO & GEO Advisor, has spent the past several years working with enterprises and institutional clients on GEO readiness assessments. What follows is the framework used in those assessments: not an exhaustive checklist, but the areas where the highest-leverage improvements consistently appear.

Technical Foundations: Schema, Structure, and Crawlability

The technical layer of GEO readiness is simpler than it first appears. It does not require a complete rebuild; it requires targeted additions and corrections to what is already there. The three areas that matter most are structured data, crawlability for AI bots, and server-side rendering.

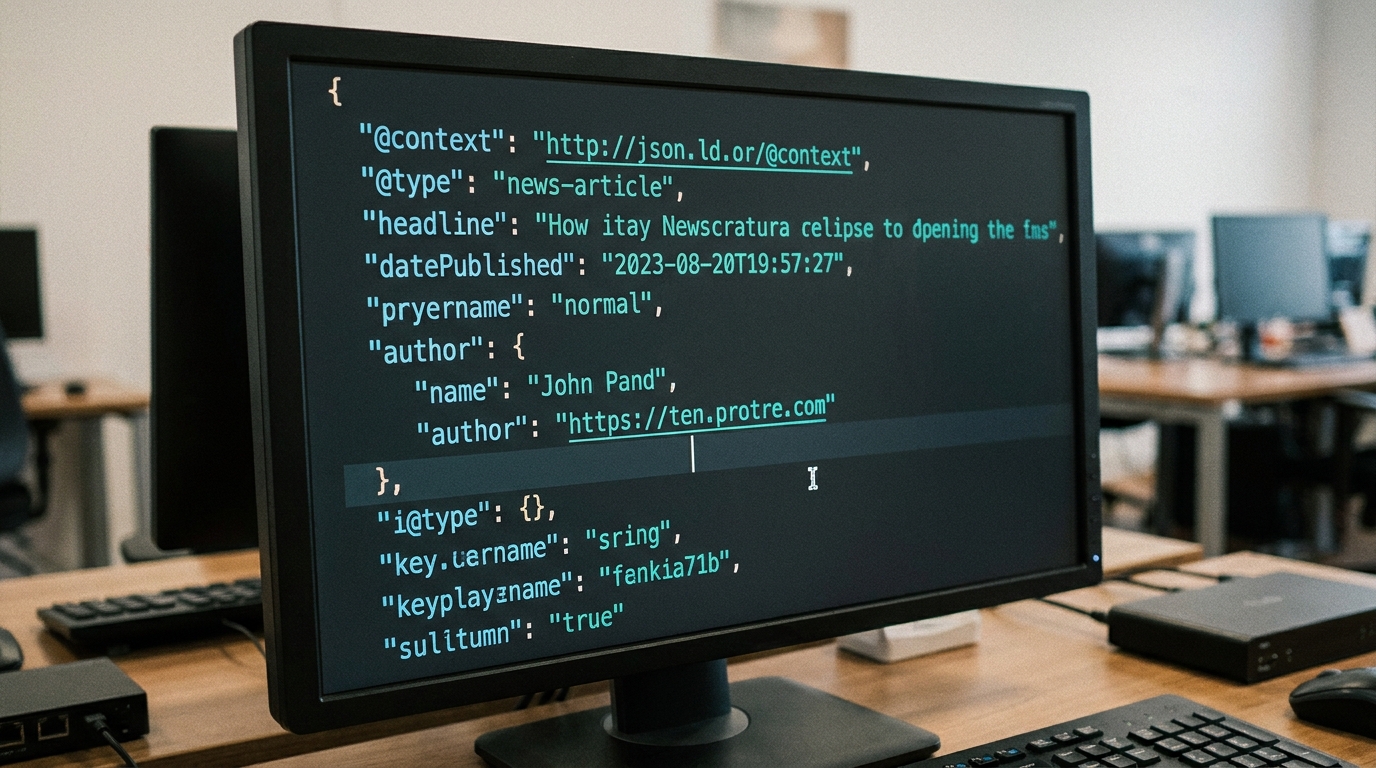

Structured data, specifically JSON-LD schema markup, is the clearest signal a website can send to an AI system about who it is, what it does, and how its content should be understood. A Person schema with a complete sameAs array linking to LinkedIn, Wikidata, and other authoritative sources tells an AI system not just that this page mentions a person, but that this person has a verified identity across the web. That confidence directly affects citation probability.
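To make the Person schema concrete, here is a minimal sketch in Python that builds the JSON-LD and wraps it in the script element a page would carry. The helper names (`person_jsonld`, `as_script_tag`) and every URL are placeholders I have invented for illustration; the property names (`name`, `jobTitle`, `url`, `sameAs`) are standard schema.org Person properties.

```python
import json

def person_jsonld(name, job_title, url, same_as):
    """Build a schema.org Person object as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "url": url,
        "sameAs": list(same_as),
    }

def as_script_tag(data):
    """Serialise the dictionary into a JSON-LD <script> element."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so the JSON can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

person = person_jsonld(
    name="Rima Taha",
    job_title="Global SEO & GEO Advisor",
    url="https://example.com/about",  # placeholder URL
    same_as=[
        "https://www.linkedin.com/in/example-profile",  # placeholder URL
        "https://www.wikidata.org/wiki/Q00000000",      # placeholder URL
    ],
)
print(as_script_tag(person))
```

Generating the markup from one function, rather than hand-editing it per page, is one way to keep the name and job title identical wherever the schema appears.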

Crawlability for AI systems is a newer concern. Major AI platforms run their own crawlers, including GPTBot (OpenAI), ClaudeBot (Anthropic), and PerplexityBot (Perplexity), and their operators state that these crawlers respect robots.txt. Many websites accidentally block these bots through broad disallow rules or legacy firewall settings that treat unfamiliar user agents as threats. The first step in a GEO readiness audit is simply checking that your robots.txt file permits the crawlers that matter, and that your CDN and edge security rules are not silently blocking them.
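The robots.txt part of that check can be sketched with Python's standard-library `urllib.robotparser`. The sample rules and the URL below are invented for illustration, and `crawler_access` is a hypothetical helper name. Note that the standard-library parser applies the first matching rule, which may differ in edge cases from how an individual crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

def crawler_access(robots_txt, url, agents=AI_CRAWLERS):
    """Return {agent: allowed?} for a URL, given the text of a robots.txt file."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Invented example: one crawler allowed, one blocked, one left to the default rule.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /private/
"""

report = crawler_access(SAMPLE_ROBOTS, "https://example.com/insights/geo-readiness")
print(report)  # {'GPTBot': True, 'ClaudeBot': False, 'PerplexityBot': True}
```

This only covers robots.txt. A crawler that passes here can still be stopped at the CDN or firewall, which needs a separate check.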

Server-side rendering is the third foundation. Websites that render content entirely through client-side JavaScript are genuinely difficult for some AI crawlers to process reliably. If your key content pages (services, about, case studies, insights articles) depend on JavaScript to render their primary content, a significant portion of that content may simply not be visible to certain AI systems. This is one of the most important conversations I have with engineering teams during GEO readiness assessments.
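A rough way to test this is to look at the HTML the server returns before any JavaScript runs and ask whether the phrases that matter are already in it. The sketch below assumes you have that raw HTML as a string; the helper names and the two sample pages are invented for illustration, and it is a heuristic rather than a model of how any particular crawler behaves.

```python
from html.parser import HTMLParser

class VisibleText(HTMLParser):
    """Collect text that is present in the markup itself, ignoring script, style, and template content."""
    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())

def static_text(html):
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)

def missing_without_js(html, expected_phrases):
    """Return the phrases that a client which does not execute JavaScript would not find."""
    text = static_text(html).lower()
    return [p for p in expected_phrases if p.lower() not in text]

# Invented examples: a client-side shell versus a server-rendered page.
CSR_SHELL = '<html><body><div id="root"></div><script>render("GEO readiness audit")</script></body></html>'
SSR_PAGE = '<html><body><h1>GEO readiness audit</h1><p>Schema validation and entity audit.</p></body></html>'

print(missing_without_js(CSR_SHELL, ["GEO readiness audit"]))  # ['GEO readiness audit']
print(missing_without_js(SSR_PAGE, ["GEO readiness audit"]))   # []
```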

"A GEO-ready website does not need to be rebuilt. It needs to be understood, which requires knowing what AI systems can and cannot see within it."

Rima Taha

Content Readiness: Passage Structure and Entity Consistency

Technical foundations create the conditions for AI citability. Content readiness determines whether that citability is actually realised. The most common content issue I find in GEO audits is what I call entity drift: the same person, service, or concept referenced under multiple different labels across the same website. One page calls the service "SEO consulting." Another calls it "search engine optimisation advisory." A third describes "digital visibility strategy." To a human reader, these are obviously the same thing. To an AI system building a picture of what this website is about, they are three different signals that do not reinforce each other.

Entity consistency is the practice of using the same label for the same concept, every time, across every page. It is not keyword stuffing; it is the difference between a confused entity graph and a coherent one. When an AI system can reliably identify that every reference to "Rima Taha, Global SEO & GEO Advisor" points to the same person with the same expertise and the same body of work, the confidence of any citation using her as a source increases substantially.
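Finding where labels drift is mechanical, even though choosing the canonical label is not. As a minimal sketch, assuming you already have each page's text in a dictionary keyed by path, the following counts where each competing label appears. The function names and sample pages are invented for illustration; the three labels are the ones from the example above.

```python
import re
from collections import defaultdict

def find_label_usage(pages, labels):
    """Map each label to the pages that use it and how often (case-insensitive, whole phrase)."""
    usage = defaultdict(dict)
    for url, text in pages.items():
        for label in labels:
            pattern = r"\b" + re.escape(label) + r"\b"
            count = len(re.findall(pattern, text, flags=re.IGNORECASE))
            if count:
                usage[label][url] = count
    return dict(usage)

def drifting_labels(usage, canonical):
    """Labels in use on the site other than the one chosen as canonical."""
    return sorted(label for label in usage if label != canonical)

LABELS = [
    "SEO consulting",
    "search engine optimisation advisory",
    "digital visibility strategy",
]

# Invented page text for illustration.
pages = {
    "/services": "Our SEO consulting practice helps enterprises. SEO consulting starts with an audit.",
    "/about": "We offer search engine optimisation advisory to institutions.",
    "/insights": "A digital visibility strategy begins with structure.",
}

usage = find_label_usage(pages, LABELS)
print(usage["SEO consulting"])                    # {'/services': 2}
print(drifting_labels(usage, "SEO consulting"))
```

The output is a worklist, not a fix: someone still has to decide which label wins and rewrite the pages that use the others.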

Passage structure is the complementary requirement. Each key section of your site, particularly on service pages and insights articles, should be able to function as an independent citation. It should open with a clear statement of its premise, develop that premise with evidence or argument, and close with a clear conclusion. This does not require shorter pages. It requires intentionally structured sections.
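Whether a section can stand alone is ultimately an editorial call, but a crude first pass can flag candidates for review. The sketch below splits a page at its H2 headings and flags sections that are very short or that open with a word pointing back at earlier text. Both heuristics, the `DANGLING_OPENERS` list, the `min_words` threshold, and the sample HTML are my own assumptions for illustration, not an established standard.

```python
from html.parser import HTMLParser

# Assumption: a section opening with one of these words probably leans on earlier context.
DANGLING_OPENERS = {"this", "that", "these", "those", "it", "they"}

class H2Sections(HTMLParser):
    """Split a page into [heading, body] pairs at each <h2>."""

    def __init__(self):
        super().__init__()
        self.sections = []
        self._in_h2 = False

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_h2 = True
            self.sections.append(["", ""])

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_h2 = False

    def handle_data(self, data):
        if not self.sections:
            return  # text before the first <h2> is ignored
        index = 0 if self._in_h2 else 1
        self.sections[-1][index] += data + " "

def review_sections(html, min_words=40):
    """Return (heading, issues) for every H2 section in the page."""
    parser = H2Sections()
    parser.feed(html)
    parser.close()
    findings = []
    for heading, body in parser.sections:
        words = body.split()
        issues = []
        if len(words) < min_words:
            issues.append("too short to stand alone")
        if words and words[0].strip("\"'").lower() in DANGLING_OPENERS:
            issues.append("opens with a reference to earlier text")
        findings.append((" ".join(heading.split()), issues))
    return findings

# Invented sample page.
SAMPLE = (
    "<h2>What is entity consistency?</h2>"
    "<p>Entity consistency is the practice of using the same label "
    "for the same concept across every page.</p>"
    "<h2>Why it matters</h2><p>This is why citations improve.</p>"
)

for heading, issues in review_sections(SAMPLE, min_words=10):
    print(heading, issues)
```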

| Area | GEO-Ready | Not GEO-Ready |
|---|---|---|
| Schema markup | Person/Organization with sameAs links | Missing or incomplete schema |
| AI bot access | GPTBot, ClaudeBot, PerplexityBot allowed | Blanket disallow or CDN blocks |
| Rendering | Server-side or hybrid rendering | Client-side JS-only rendering |
| Entity naming | Consistent labels across all pages | Multiple terms for the same concept |
| Content structure | Self-contained, citable H2 sections | Long narrative with buried answers |
| Internal linking | Entity-reinforcing anchor text | Generic "click here" or "read more" |
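The internal linking row is the easiest one in the table to check automatically. As a minimal sketch, the following collects every link on a page and reports those whose anchor text is generic. "Click here" and "read more" come from the table; the other entries in `GENERIC_ANCHORS`, the helper names, and the sample HTML are assumptions added for illustration.

```python
from html.parser import HTMLParser

# "click here" and "read more" are from the table; the rest are illustrative additions.
GENERIC_ANCHORS = {"click here", "read more", "learn more", "here", "more"}

class AnchorCollector(HTMLParser):
    """Collect (href, anchor text) for every <a href=...> in the page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None

def generic_links(html):
    """Return the links whose anchor text says nothing about the target."""
    collector = AnchorCollector()
    collector.feed(html)
    collector.close()
    return [(href, text) for href, text in collector.links
            if text.lower() in GENERIC_ANCHORS]

# Invented sample markup.
SAMPLE_LINKS = (
    '<p><a href="/services/geo">GEO-Ready Website service</a> '
    '<a href="/insights">Read more</a> '
    '<a href="/contact">click here</a></p>'
)

print(generic_links(SAMPLE_LINKS))  # [('/insights', 'Read more'), ('/contact', 'click here')]
```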

Entity Visibility: Who You Are Across the Web

GEO readiness is not solely about what your website says. It is about what the broader web says about you, and whether those signals are consistent enough for AI systems to form a high-confidence understanding of who you are and what you are known for. This is entity visibility, and it extends beyond your own domain.

The most important external entity signals are LinkedIn, Google Business Profile (where applicable), and any third-party publications that include your name, title, and areas of expertise. When these sources use consistent language (the same name, the same job title, the same description of your work), AI systems encounter reinforcing signals from multiple independent sources. That consistency is what moves you from "a person mentioned on the web" to "a recognised entity with a coherent identity."

The sameAs property in your JSON-LD schema is how you bridge your own domain to those external signals. A Person schema that includes sameAs links to your LinkedIn profile, a Wikidata entry if one exists, and any authoritative directories where you are listed creates a structured map that AI systems can follow to verify and enrich their understanding of who you are. This is one of the highest-leverage technical changes a website can make for GEO.
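Checking an existing page for this is straightforward. The sketch below pulls JSON-LD out of a page's HTML and reports which expected profiles are missing from the Person schema's sameAs list. It assumes a top-level object with a plain string `@type` and does not handle `@graph` wrappers; the helper names, the sample page, and the list of expected hosts are invented for illustration.

```python
import json
from html.parser import HTMLParser
from urllib.parse import urlparse

class JsonLdExtractor(HTMLParser):
    """Collect every parseable JSON-LD block in a page."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._capture = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._capture = True
            self._buffer = []

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._capture:
            self._capture = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is skipped here; a fuller audit would report it

def same_as_gaps(html, expected_hosts):
    """List the expected hosts that the first Person schema does not link to via sameAs."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    people = [b for b in extractor.blocks
              if isinstance(b, dict) and b.get("@type") == "Person"]
    if not people:
        return ["no Person schema found"]
    links = people[0].get("sameAs", [])
    if isinstance(links, str):
        links = [links]  # sameAs may be a single URL rather than a list
    hosts = {urlparse(link).hostname for link in links}
    return [f"no sameAs link for {host}" for host in expected_hosts if host not in hosts]

# Invented sample page with a placeholder profile URL.
PAGE = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Person", "name": "Rima Taha",
 "sameAs": ["https://www.linkedin.com/in/example-profile"]}
</script>
</head><body></body></html>"""

print(same_as_gaps(PAGE, ["www.linkedin.com", "www.wikidata.org"]))
```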

GEO readiness is a combined technical and editorial problem. The technical layer creates the conditions for AI visibility. The editorial layer determines whether that visibility translates into confident, recurring citation. You need both, and they require different teams, different skills, and a different kind of ongoing audit.

Running a GEO Readiness Audit: Where to Start

A GEO readiness audit has four phases: technical access review (can AI bots reach and render your content), schema validation (are your structured data signals complete and accurate), entity audit (are your names and descriptions consistent across your site and the wider web), and content structure review (are your key sections independently citable). These phases can be run in sequence or in parallel depending on your team structure, but the technical access review should always come first: there is no point optimising content that AI crawlers cannot reach.
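The ordering rule can be expressed as a small runner. In this sketch each phase is a function returning a list of findings, and the later phases are skipped while the access review still reports problems. Treating access findings as a hard gate is my own simplification, and `run_audit` and the lambda checks are hypothetical stand-ins for real checks.

```python
def run_audit(checks):
    """Run audit phases in order; `checks` maps phase name -> function returning a list of findings.

    The first phase is treated as the technical access review. If it reports
    any findings, the remaining phases are marked None (skipped).
    """
    names = list(checks)
    access_phase, later_phases = names[0], names[1:]

    report = {access_phase: checks[access_phase]()}
    if report[access_phase]:
        for name in later_phases:
            report[name] = None  # skipped until access problems are fixed
        return report

    for name in later_phases:
        report[name] = checks[name]()
    return report

# Hypothetical checks for illustration; real ones would inspect the site.
checks = {
    "technical access": lambda: ["ClaudeBot disallowed in robots.txt"],
    "schema validation": lambda: [],
    "entity audit": lambda: ["three labels in use for one service"],
    "content structure": lambda: [],
}

for phase, findings in run_audit(checks).items():
    print(phase, "->", "skipped" if findings is None else findings)
```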

The most common finding in the technical access phase is not a deliberate block but an accidental one. CDN configurations, WAF rules, and rate-limiting policies written before AI crawlers existed often catch legitimate AI bots in filters designed for malicious scrapers. These are quick fixes once identified, but they require someone who knows to look for them.
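One way to look for these accidental blocks is to request the same URL with a browser-like user agent and with crawler-like ones, then compare the status codes. The sketch below does that with the standard library. The user-agent strings are simplified stand-ins, not the strings the vendors publish, and the helper names are invented. A WAF that verifies crawler IP ranges may also reject a spoofed request that it would accept from the real bot, so treat a difference as a prompt to inspect the edge configuration rather than as proof.

```python
import urllib.error
import urllib.request

BROWSER_UA = "Mozilla/5.0 (compatible; audit-baseline)"  # simplified stand-in
BOT_UAS = {
    # Simplified stand-ins that only carry the crawler token.
    name: f"Mozilla/5.0 (compatible; {name}/1.0)"
    for name in ("GPTBot", "ClaudeBot", "PerplexityBot")
}

def http_status(url, user_agent, timeout=10):
    """Fetch a URL with the given User-Agent and return the HTTP status code."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code

def blocked_agents(url, fetch=http_status):
    """Return (baseline status, {agent: status}) for agents treated differently from a browser."""
    baseline = fetch(url, BROWSER_UA)
    differing = {}
    for name, user_agent in BOT_UAS.items():
        status = fetch(url, user_agent)
        if status != baseline:
            differing[name] = status
    return baseline, differing

# Offline demonstration with a fake fetcher that imitates a firewall rule.
def fake_fetch(url, user_agent):
    return 403 if "ClaudeBot" in user_agent else 200

print(blocked_agents("https://example.com/", fetch=fake_fetch))  # (200, {'ClaudeBot': 403})
```

Passing the fetcher in as a parameter keeps the comparison logic testable without touching the network; calling `blocked_agents(url)` with no `fetch` argument uses the real HTTP request.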

The entity audit is often where the most work is concentrated, not because it is technically complex but because it requires editorial decisions about how you describe yourself and your work, followed by consistent implementation across every page on the site. This is the kind of work that cannot be automated. It requires human judgement about which descriptions are most accurate, most distinctive, and most likely to be used by the people asking questions that you want to answer.
